Over my two-week vacation before I returned to Berkeley for the summer, I read Leonard Susskind and George Hrabovsky’s The Theoretical Minimum: What You Need to Know to Start Doing Physics. This book was based on the first course in a series of courses called the Theoretical Minimum, taught by Leonard Susskind at Stanford targeted at curious older students who had, in their lives, fallen through the cracks of physics education but wanted to learn.

This book was focused on mechanics, but dived pretty rapidly into more advanced formulations of mechanics that I had never really learned in a class. Coincidentally, I’m scheduled to take that class next semester, so I wanted to dive in to get a brief taste of Lagrangian and Hamiltonian mechanics.

So, to start, I set out to simulate the squishy pendulum.

What is the squishy pendulum?

If you haven’t heard of these words, it’s probably because nobody calls the squishy pendulum the “squishy pendulum.” I’m choosing to call it that because that was the first name in mind I could think of when I thought about this system, and I was taken by how silly it is. I shall refrain from using the more conventional name until I actually know what I’m talking about, which I should emphasize that I don’t at present time.

The squishy pendulum is called the “spring pendulum” by less fun, more serious people. A squishy pendulum is just like a normal pendulum, which is a taut string with a point mass attached to the end. However, in place of the taut string, the squishy pendulum has a spring following Hooke’s law which is allowed to stretch or squeeze, with a restoring force trying to bring it to equilibrium.

But to understand how I am going to attempt to simulate this pendulum, I will first calculate the equations of motion of the common pendulum under several equivalent paradigms of classical mechanics.

The Common Pendulum

Part 1: The Newtonian Formulation

The Newtonian formulation of classical mechanics is basically the first thing that you learn in school. We can first talk about Newton’s second law, which reads

where is the force,

is the mass, and

is the acceleration. In addition, for the potential energy

, we have

where the upside-down triangle symbol is like a spatial derivative (I elaborate on this here). A useful picture to have in mind is a landscape representing the potential energy , where a point wants to go in the direction where the potential falls the fastest proportional to how steep it is in that direction (as in, it’s going to fall down the hill, and the steeper the hill is, the more it will be accelerated in that direction).

so we’ve really got

which is all fine and good, if you think about it. Basically, we imagine that, at any given time, a particle looks at what the potential energy looks like on this “landscape” and then accelerates a bit in the direction of the steepest descent. Then it, again, looks at what the potential energy looks like on the landscape, and accelerates again, and so on and so forth.

If we want to solve for the dynamics of the simple pendulum, we simply draw a free-body diagram which indicates all the forces of the problem.

If we assume, as we should, that the string of the pendulum is taut, the pendulum mass must stay on a circle centered around where the string meets the ceiling with a radius of the length of the string. This means that the motion of the pendulum is tangential to this circle, since it can’t move in any other direction and still lie on the circle. Since the tension force due to the string is parallel to the radius, it doesn’t serve any purpose besides keeping the mass on the aforementioned circle.

The only thing that we really care about is the component of gravity tangential to this circle. This yields

By Newton’s second law,

where is the second time derivative of

. Setting these equal, we get a second-order differential equation in

:

Oftentimes, the next step is to assume that , which makes this a simple harmonic oscillator. I’m not going to do that here, since we will be using Mathematica to solve the dynamics numerically. However, we note that the dynamics somehow don’t depend on mass. No matter how massive the pendulum is, it will still oscillate at the same rate. The dynamics of the pendulum depend only on

(which is different on different planets) and

, the length of the pendulum.

However, critically, we have this picture of a mass which, at any given time, looks around at what it forces are acting on it, moves accordingly, and then repeats. This sounds like a dumb question at first, but is there another way to think about this?

Part 2: The Lagrangian Formulation

In most cases that we care about, we can talk about a potential which only depends on position, or coordinates, of everything. Critically, it does not depend on the velocity of anything, or the rates of change of any of the coordinates. This can’t describe every problem in exactly the way we would like to do sometimes, but will work if your forces are conservative, meaning that, if you apply forces on a thing and it ends up somewhere else, its energy will not depend on the exact path taken to get there.

In this section, I’m going to describe how you derive the equations of motion using the Lagrangian formalism. I won’t go into why these things are true (in no small part because I’m still a beginner), but it all has to do with this idea called the principle of least action, which states that the “path” taken by a system in configuration space (basically, the space of your coordinates) will be the one where the action is stationary. The action is the time integral of the all-important Lagrangian , defined as

where is the kinetic energy. What’s weird about this is that there is a negative sign in between the kinetic and potential energies. For some reason, for each coordinate

that you have, it is true that

Moreover, it can be shown that you don’t even need to use a Cartesian or Cartesian-like coordinate system, which may be seen as a shortfall of Newton’s force vector adding picture. You can pick any coordinate system you want, as long as it works as a coordinate system. Critically, in the pendulum problem, we only need one coordinate: . This is because we have some idea about the limitations of the motion of the mass in the system’s configuration space. Since we only actually need to know one number to know what the system looks like at a given time, the system is said to only have one degree of freedom.

This is a bit abstract, so let’s try to solve the pendulum problem with this formalism. We first find the kinetic and potential energies, which we can make short work of:

and

Then the Lagrangian is very easy to write:

We can then compute the first derivative we need on the left-hand side of the Euler-Lagrange equation (in this case, there is only one). The way this is going to work is, since we’re doing some partial derivatives, a partial derivative in ignores

, and vice versa. We see that

As a side-note, the above expression is also called the canonical momentum conjugate to

. Even though it’s called the canonical momentum, it doesn’t have to be what we consider a real “momentum” and may not even have units of momentum (as is the case here). The canonical momentum can and often does depend on the choice of coordinates. In this case, if we look closely, we see that

is actually just the angular momentum of the pendulum mass about the axis of rotation. That’s awfully neat, isn’t it?

Anyway, we take the time derivative of this thing, getting

The right-hand side can also be easily computed as

Setting these equal and multiplying over factors, we get our answer:

Well, that looks familiar. It’s as if it were giving the same answer as we got using the Newtonian formalism. While this question wasn’t too hard to do in the Newtonian formalism, we can see how the Lagrangian formalism is often way, way easier to deal with than the Newtonian formalism. We can choose our coordinate systems to be as convenient as we please, we work with energies rather than forces, and doing out the Euler-Lagrange equations only requires that we do a bunch of derivatives. How else can we think about this problem?

Part 3: The Hamiltonian Formulation

The Hamiltonian formulation is a different formulation of classical mechanics which students often first encounter in quantum mechanics. In Lagrangian mechanics, we think about configuration space, which is, again, just the space of coordinates. However, to know everything about the system, we also need to know the instantaneous time rate of change of those coordinates. For example, knowing for the pendulum is not sufficient to know everything about the system. I would also need to know

, and the equations of motion will tell me

.

However, we can also work in phase space, the space of coordinates and canonical momenta. If the configuration space as dimensions, then the phase space has

dimensions,

of which are coordinates and

of which are canonical momenta, one per coordinate. Unlike for configuration space, if you know where a system is in phase space at a given time, you know literally everything there is to know about the system. In theory, you know its past, present, and future.

With this vague, hand-wavy motivation, we define the Hamiltonian:

Wait, you might ask, isn’t this just the energy? Well, yeah. But we like to use fancy words, and, even though I’m a proponent of using silly words to describe fun physics, even I can’t dodge the prevalence of this word. There’s probably more nuance to this, like that the Hamiltonian is the function that assigns an energy quantity to each point in phase space, but whatever. The Hamiltonian shows up in Hamilton’s equations, an almost symmetric pair of equations, two per coordinate (as opposed to one per coordinate, as in Lagrangian mechanics). Hamilton’s equations are

where the negative sign breaks the symmetry. Once worked out, Hamilton’s equations give the equations of motion through phase space. You can use this to get back to the result from Newtonian and Lagrangian mechanics, but there are times where you wonder why you would even want to do that since the phase space picture is kind of beautiful.

We can write down the Hamiltonian for the simple pendulum system:

But we have to be a bit careful. We don’t really want to make reference to directly. Fortunately, we remember from before that

so that

This means that the Hamiltonian is also equal to

which is a form that we like. The first of Hamilton’s equations gives

Notice that this is just the tangential force of gravity on the pendulum ball multiplied by the length of the string. It’s the torque. This makes sense, because the time derivative of the angular momentum is torque, which is exactly what is being said above.

The second equation gives

If you stare closely, you see that this is just the definition of the canonical momentum. Nothing wrong here. Indeed, we see that this is a system of two first-order coupled ordinary differential equations.

Generally speaking, you have a choice. You can go with the Lagrangian formalism, in which case, for coordinates, you end up with

differential equations second-order in time. You can also go with the Hamiltonian formalism, in which case you would instead end up with twice as many differential equations (

) which are only first-order in time. This is often a good trade. I was also once told that Hamiltonian mechanics is “symplectic,” which was supposed to mean that the errors in numerically solving the equations of motion would be bounded. I don’t know the details exactly, but we will later see what is meant by this.

If you insist on recovering the equations of motion from before, we can take the time derivative of the second equation of motion we found:

We then substitute in for the first equation of motion:

Déjà vu, right?

Or you could just go with the Newtonian formalism, if you should really insist on hating yourself.

The Squishy Pendulum

In this section, I’ll describe how I go about solving for the dynamics of the squishy pendulum. I’m not going to treat it in the Newtonian way, because one should not draw free-body diagrams in public, and because, despite all signs to the contrary, I do not, in fact, hate myself.

Part 1: The (Squishier) Lagrangian Formulation

In order to solve the squishy pendulum using the Lagrangian formulation for classical mechanics, we basically do the same thing we did for the common pendulum. However, there’s one really big difference: before, in order to know what the pendulum “looked like” at a given time, you needed to know only one number (). Now, for the squishy pendulum, you need to know two:

and

, the latter of which is the (possibly negative) displacement of the spring mass from the equilibrium length of the spring. The configuration space of the squishy pendulum is two-dimensional.

If you want to know everything in the squishy pendulum’s past, present, and future, you would have to know four numbers, the two coordinates and their instantaneous rates of change, rather than the two numbers of the common pendulum.

First, we have to work out the kinetic energy term. Upon some thought, we see that we can break up the velocity of the spring mass into a component parallel to and perpendicular to the spring. Then, we can use the Pythagorean theorem to get the total squared speed:

The potential energy term now has two terms, one for the gravitational potential energy and the other for the potential energy of the spring:

where is the spring’s aptly named spring constant. The Lagrangian is then, straightforwardly,

Since the configuration space is two dimensional, we now have two Euler-Lagrange equations to solve:

As before, we can calculate the canonical momenta:

This is one of those times where both canonical momenta are easily interpretable. As before, turns out to be the angular momentum about the point where the spring is attached to the ceiling.

turns out to be the linear momentum along the direction of the spring. We can count ourselves lucky that we can readily make sense of what these canonical momenta mean in this problem.

The time derivatives of these canonical momenta are

Next, we compute the right-hand sides of the Euler-Lagrange equations:

We can retrieve our equation of motion involving :

We see that the term is very reminiscent of the sole term in the analogous equation of motion when solving for the common pendulum’s dynamics. Note that, if and

, the equation instantaneously looks like the one for the common pendulum. The second term on the left, then, is some of the dependence of the second time derivative of

on the speed at which the spring is stretching or contracting,

, which is somewhat interesting to note.

We can write the equation of motion involving :

For those who have done some work with springs before (what self-respecting physicist hasn’t?), the third term appears in many problems involving oscillating springs. If we ignore the other terms on the right-hand side, we have the equation of a simple harmonic oscillator. However, there are other terms that complicate things a bit. The second term is just the influence of gravity on , and the first term is some dependence of

on

. Maybe this is the most boring fact in the world for everyone else, but I think that the fact that these two coordinates,

and

, “talk to each other” and care about how fast the other is changing is pretty interesting.

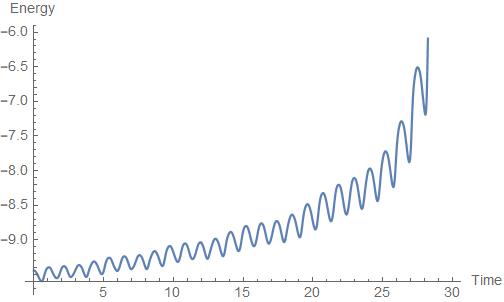

What does this actually look like? Well, Mathematica enables us to solve this pair of coupled, second-order differential equations. For some initial conditions, the solution looks robust enough:

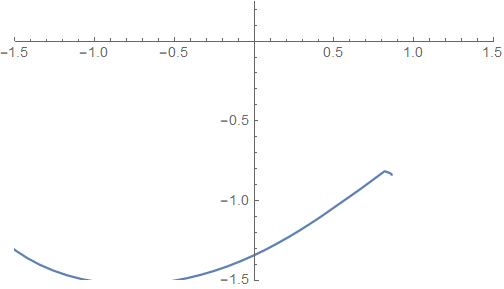

The trajectory traced out looks like this:

Although if you watch it for long enough, the pendulum spazzes out at the end and flies off to infinity. Indeed, if we look closer, we see that the energy of the system gradually creeps up due to a numerical artifact:

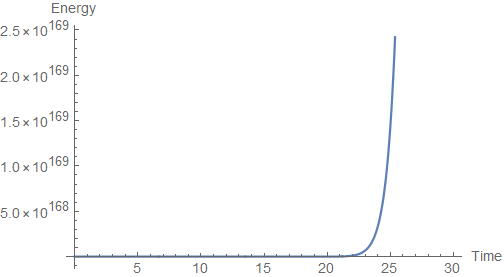

While the numerical errors aren’t bad for the first few seconds, they quickly spiral out of control. Maybe you can claim that this isn’t too much of a problem, but it quickly gets out of hand for more demanding initial conditions:

I mean, like I said, I’m no expert in either Lagrangian mechanics or computational physics, but I’m pretty sure that, when my energies start being on the order of , we’ve got some issues. So do we give up? No. We push forward and, against all sensible arguments that it maybe isn’t healthy to be simulating pendulums at 1 am, resolve ourselves to simulate the dynamics of a squishy pendulum more robustly.

Part 2: The (Squishiest) Hamiltonian Formulation

As with the common pendulum, we can solve for the dynamics of the squishy pendulum in the Hamiltonian formalism. Using the Hamiltonian formalism, we consider the phase space, which is now four-dimensional (two for the two coordinates, and another two for their time rates of change). Instead of getting two coupled second-order differential equations, we will get four coupled first-order differential equations.

Is this better, we’ll see.

In concept, deriving the equations of motion in the Hamiltonian formalism isn’t that hard. Using the expressions for the canonical momenta and expressions for kinetic and potential energy we derived before, we can use the following substitutions to write down the Hamiltonian:

(1)

(2)

The Hamiltonian becomes

There will now be four Hamilton equations:

(1)

(2)

(3)

(4)

We can go through these equations one by one. The first equation motion reads like this:

The left-hand side here is the time derivative of the angular momentum, which is also known as the torque. The magnitude of the torque is some length times some force, which we can see is what’s happening here (with being the length and

being the force).

For the second equation, we obtain

which can really just be rearranged to give us the definition of the canonical momentum conjugate to that we already knew.

The third equation looks like this:

There are a few terms here to try and interpret. The second two terms are pretty straightforward: the second term is the tendency of gravity to accelerate the mass down ( is the force on the pendulum mass in the direction parallel to the spring), and the third term is the tendency of the spring to try and reverse displacements from equilibrium. The first term is a bit harder to figure out, but, after some thinking, we realize that it essentially is the same thing as

where

and

is the direction of the pendulum mass’s motion perpendicular to the spring. It’s basically a sort of centrifugal force term.

The fourth and final equation of motion looks like this:

In perhaps the nerdiest anticlimax ever (is that a thing?), we have retrieved a pretty boring relation between the velocity and momentum along the direction of the spring.

If you want to check (why?!?), you can show that these four equations of motion are equivalent to the two equations of motion derived earlier using the Lagrangian formalism. Therefore, we don’t expect the numerical solver to tell us anything different, right?

And, it seems fine. Betrayal! But the good kind. The numerical solver was somehow able to come up with a much more robust solution for the Hamiltonian formalism than the Lagrangian formalism. We can take a look at the trajectory to be sure:

The trajectory looks way cleaner, and the energy oscillates due to numerical errors with a very small amplitude (while the energy isn’t perfectly constant with time, it sure as hell doesn’t change all that much). What if we try crazier initial conditions?

Alas, now the trajectory appears so clean that we can start to see a beautiful pattern emerge. Somehow, the Hamiltonian formalism’s four first-order equations of motion in phase space are less prone to errors than the Lagrangian formalism’s two second-order equations of motion in configuration space.

One of my professors once briefly mentioned in passing that the Hamiltonian formalism is cool because it is “symplectic,” which apparently meant something like there were bounded errors or something. My intuition for why this is better is that, since the Hamiltonian formalism really revolves around the conservation of energy (heck, the Hamiltonian is the energy), there is some deeper process which causes that conservation law to be preserved. I’m sure there’s more to it.

Concluding Remarks

For comparison, I’ve animated the numerical solutions of both sets of equations of motion on the same plot, for both sets of initial conditions:

Now, I mean, you tell me what’s better?

Obviously, there’s more to classical mechanics than this. People have written down Lagrangians describing all of physics as we know it today, and these more advanced formalisms have been applied to various research problems as well as more elegant presentations of electromagnetism and general relativity. Perhaps that’s the kind of physics one would call “sexy,” but there’s beauty in seeing how more advanced concepts figure into such simple problems as the classic mass on a spring.

The Mathematica notebook containing the simulations can be found here.